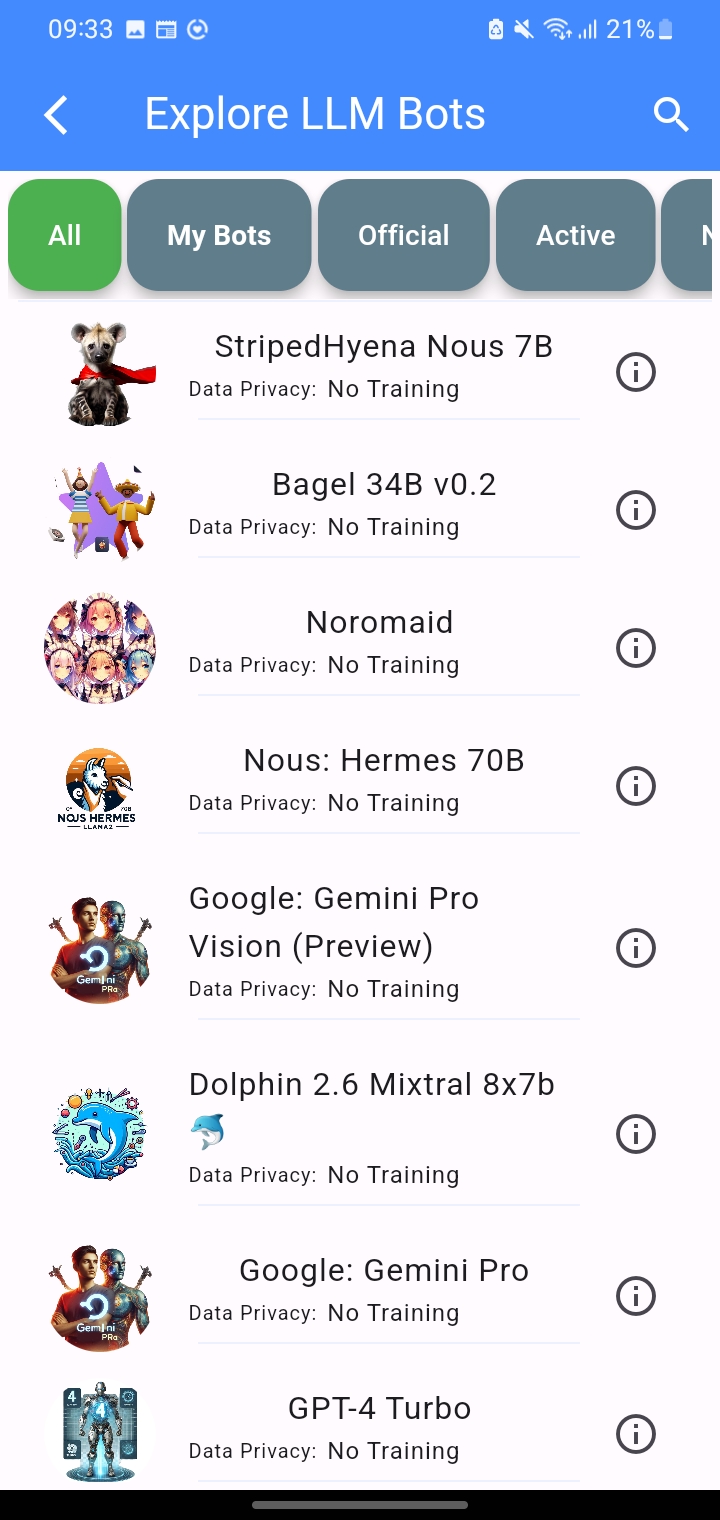

OUR APP FEATURES

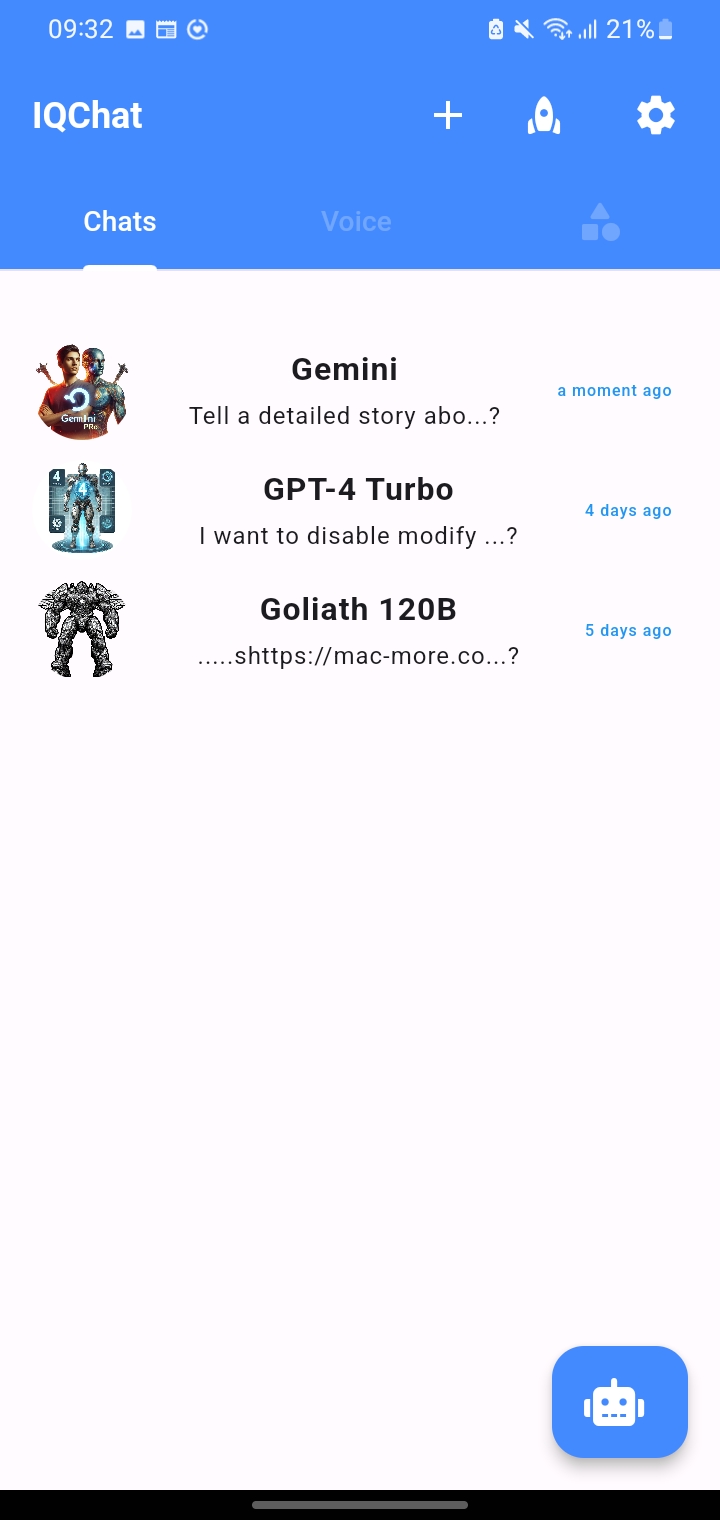

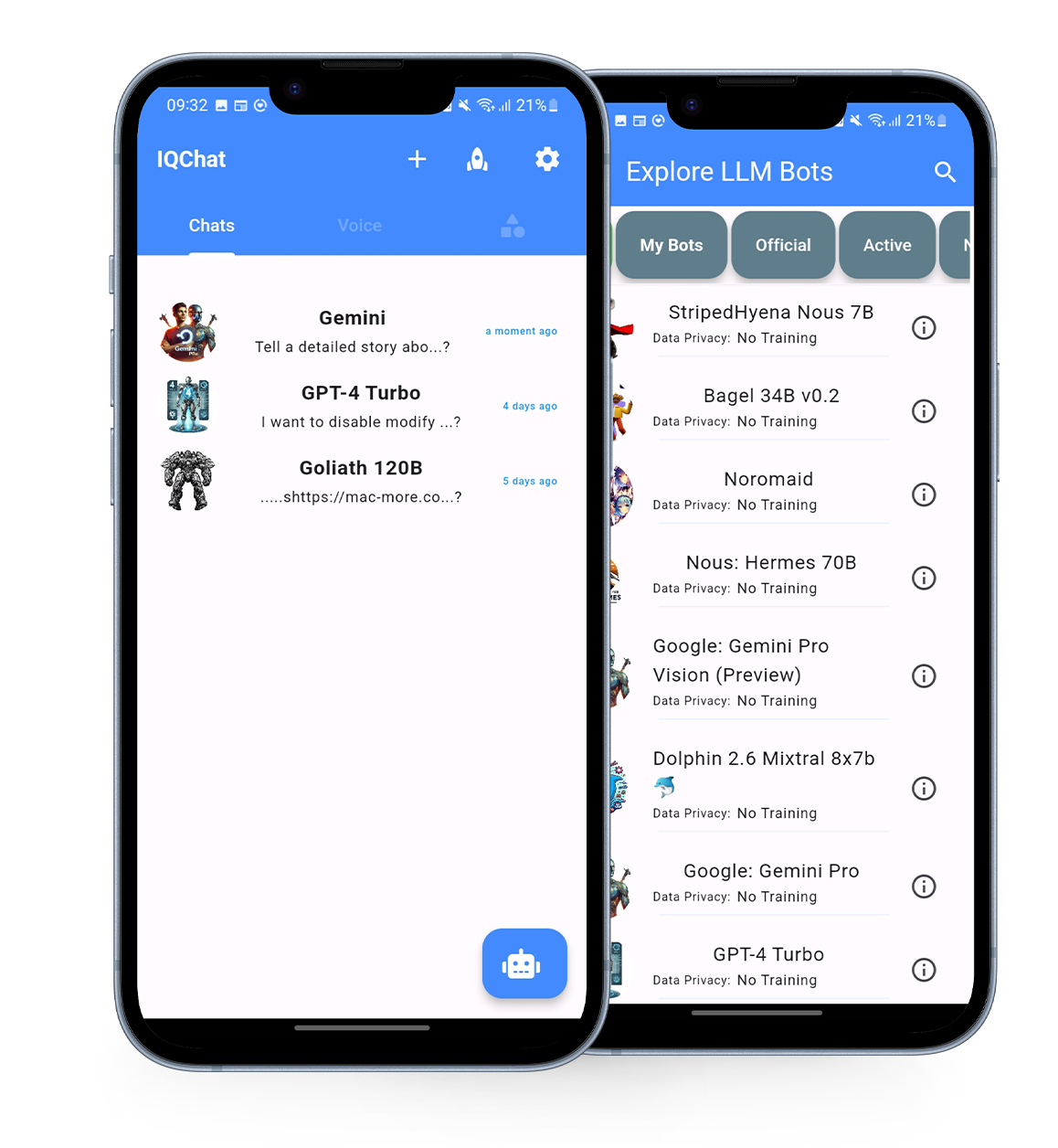

Custom selection of LLM engines

IQChat AI is backed by a variety of large language models (LLMs), including Gemini Pro, Pygmalion: Mythalion 13B, Nous: Hermes, Llama 2, GPT-4, PaLM 2, Claude 2, and more.

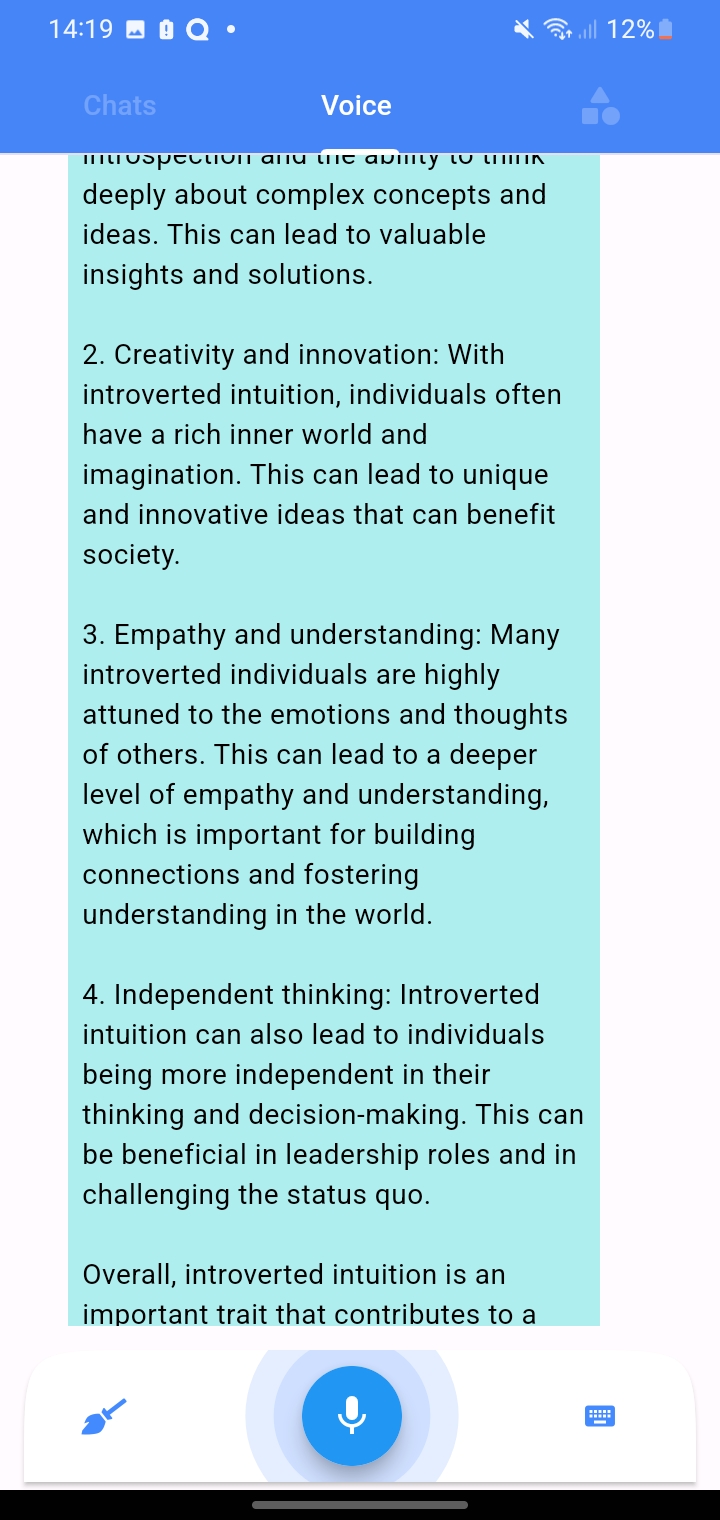

Voice-enabled chat assistant

An AI-powered assistant that helps users through chat-based conversation.

Multi-language support

IQChat AI Assistant supports multiple languages, so users can interact with the app in their preferred language.

Save Chat History

The "Save Chat History" feature allows users to store and access past conversations for future reference and review.

AI Image Creator

Generate images from text prompts.

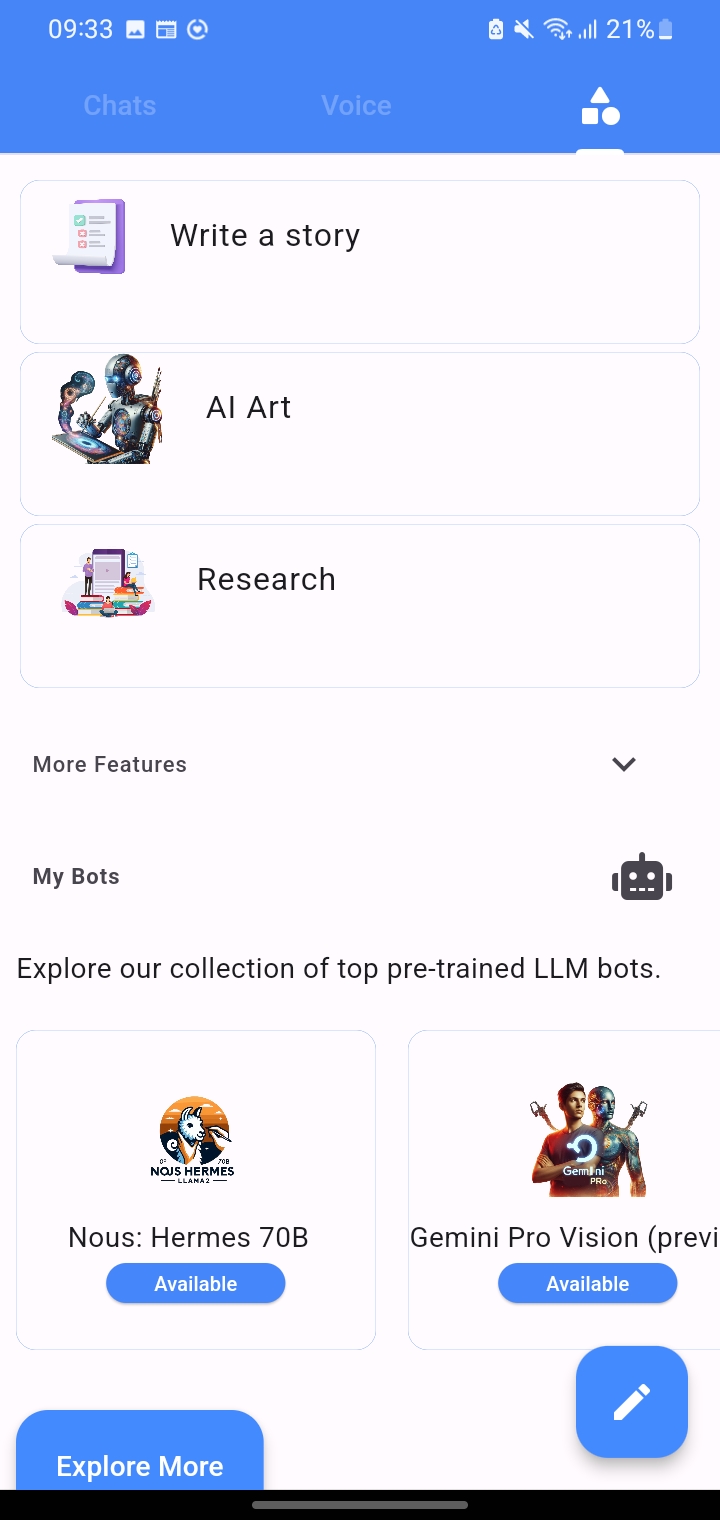

Create custom AI Assistant

In our app, you can create a personalized bot tailored to your needs and select the AI language model that best suits it.

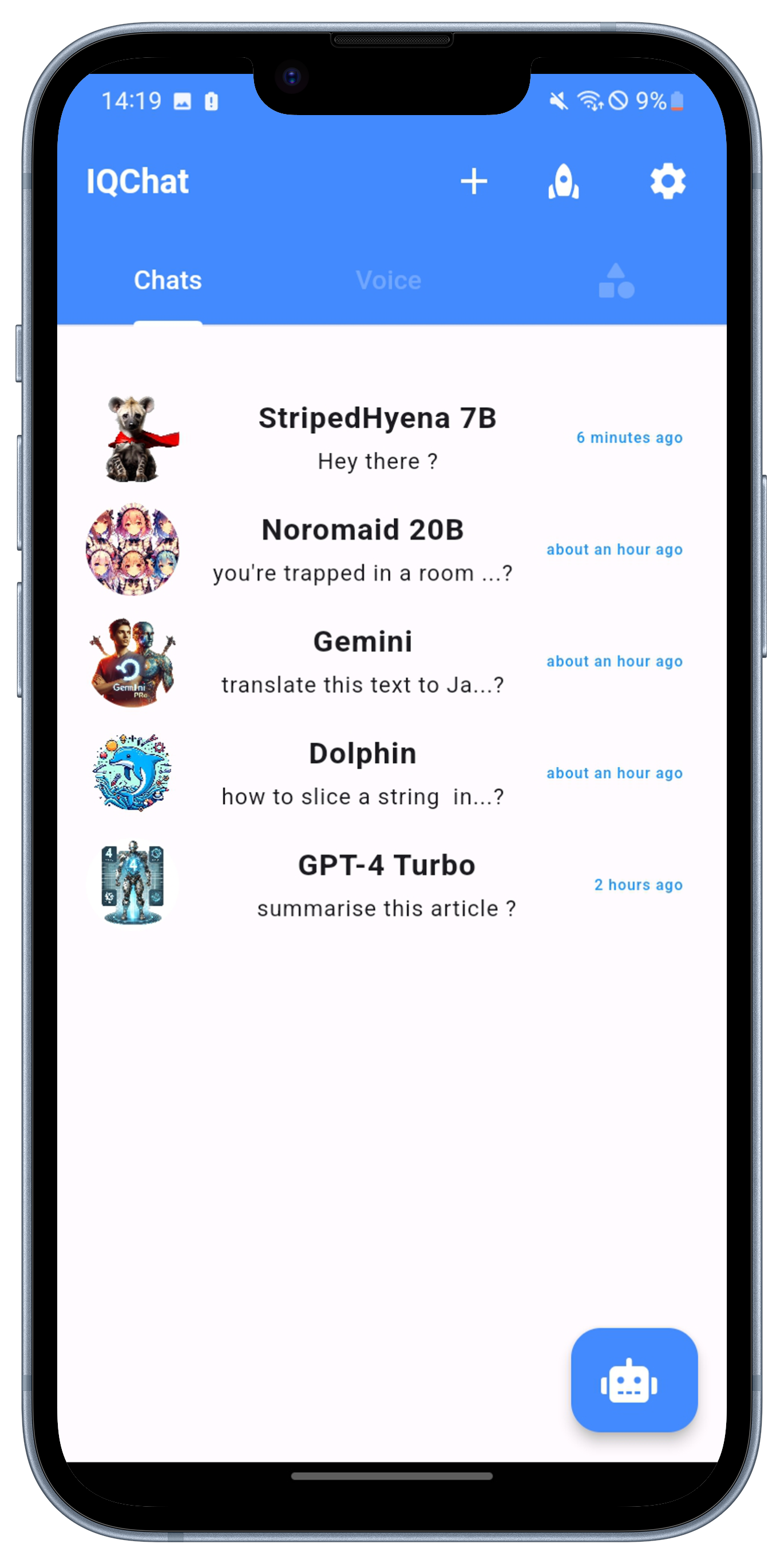

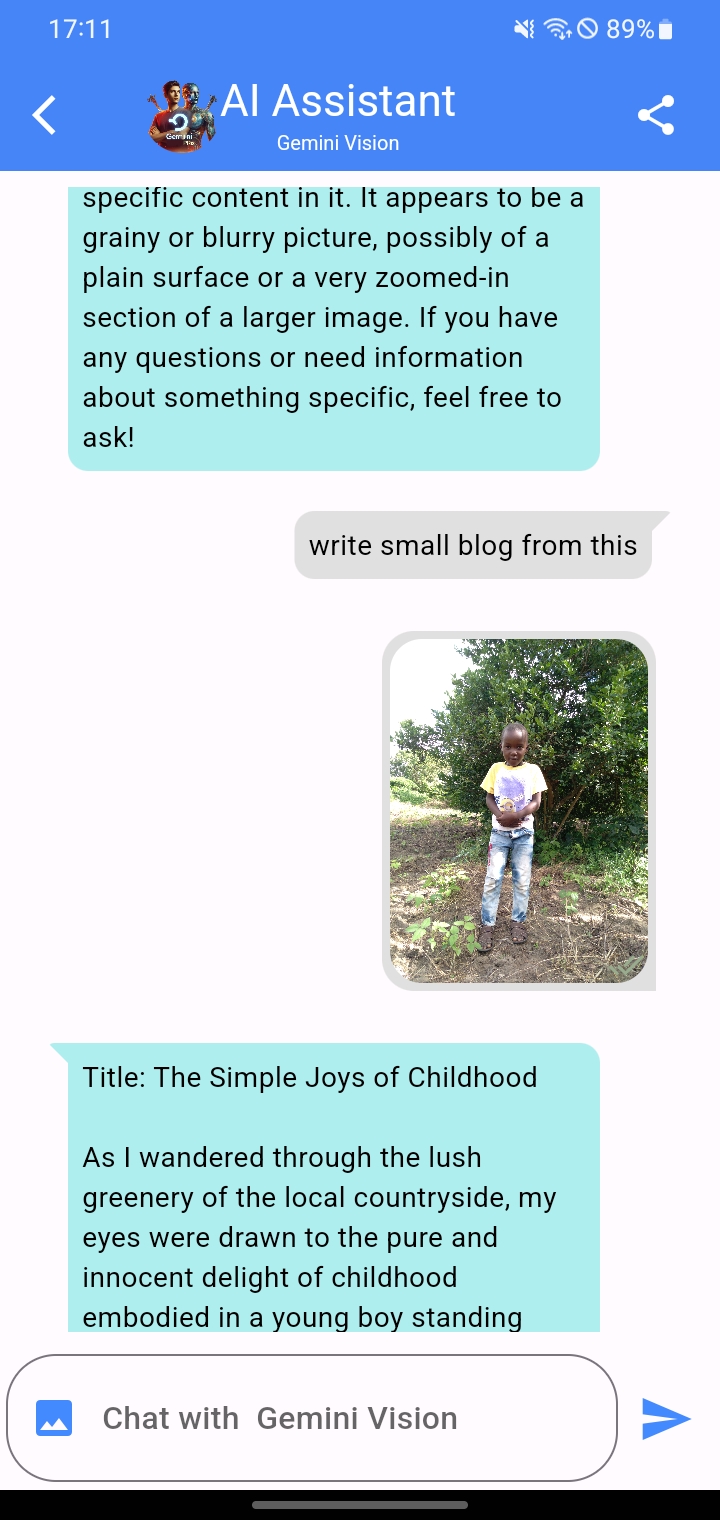

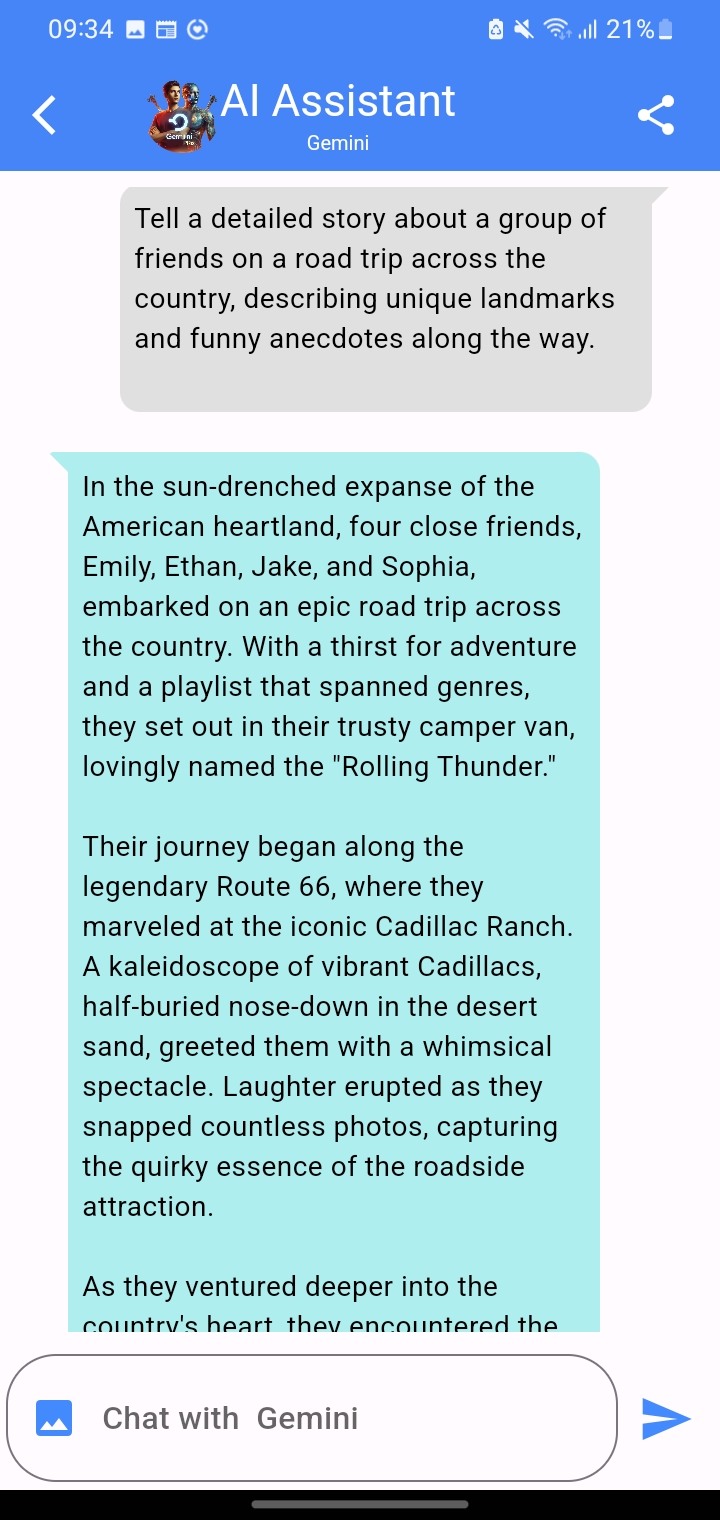

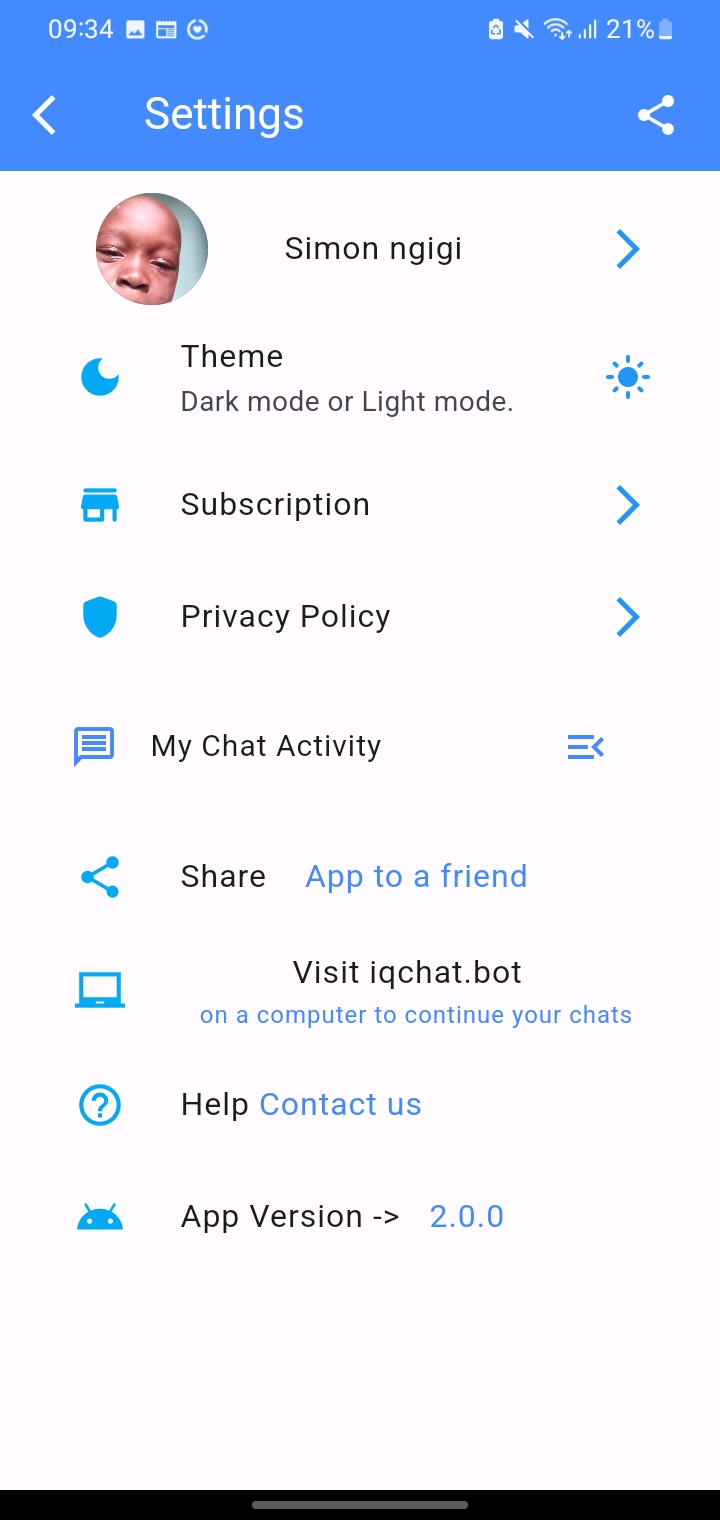

APP SCREENSHOTS

Get a glimpse of our app with these screenshots.

GET THE APP

We're happy to announce that IQChat App is now available on the Google Play Store. The app is powered by more than 20 large language model bots, selected from both open-source and closed-source models. At IQChat, we're committed to community involvement and regularly add new language models to improve your experience.

Download the app today.

FAQ

You can sign up with your email address or your Google account. You will also need to create a password.

After creating an account, you will be prompted to set up the AI chat assistant. The app will guide you through a series of questions to help personalize the assistant to your needs.

Once the AI chat assistant is set up, you can start using it to perform tasks and answer questions.

That's it! With these steps, you should be able to set up and start using the IQChat AI Assistant app on your mobile device.

IQChat App